The Agentic Identity Shift: A Security Architect’s Guide for BFSI

- Apr 16

Why your "Employee Model" for AI will fail, and how to build one that scales.

As BFSI firms move from "Chatbots" to "Autonomous Agents" that process claims, trigger wire transfers, and move sensitive PII, their existing identity controls are hitting a wall. Most organizations try to secure these agents the way they secure human employees: with a username, a service account, and a set of static permissions.

In a high-stakes regulated environment, this approach is not just inefficient; it is a critical security vulnerability.

1. The Death of the "Human Proxy" Model

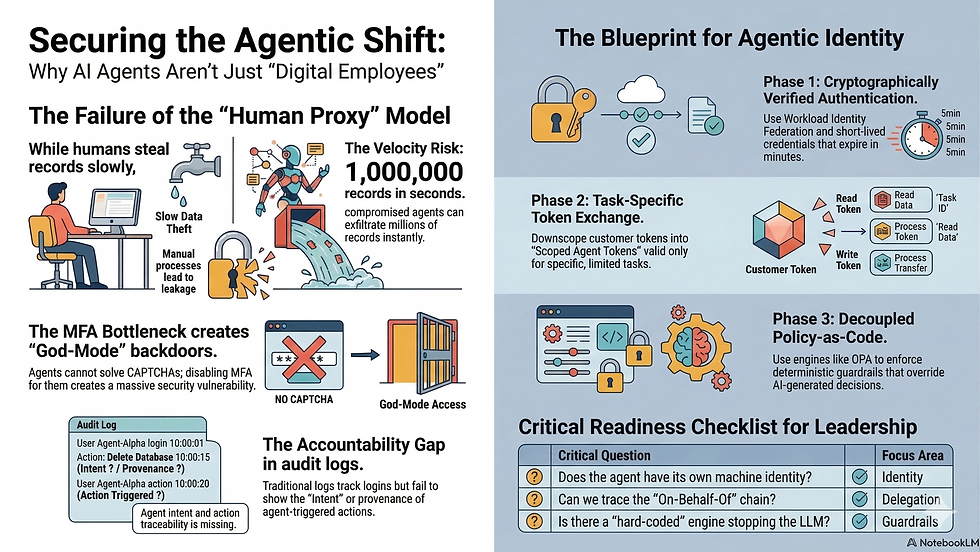

Treating an AI agent as a digital twin of a human employee breaks down for three reasons:

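The MFA Bottleneck: Humans can respond to an interactive challenge, such as approving a prompt in an authenticator app. An agent running unattended cannot. If you disable MFA to let an agent work, you’ve created a "God-mode" backdoor: an account that bypasses your strongest authentication control.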

The Velocity Risk: A compromised human might steal 10 records in a minute. A compromised agent can exfiltrate 1,000,000 records in seconds.

The Accountability Gap: Traditional audit logs show who logged in, but they don't show the Provenance Chain (e.g., did the agent trigger this $10k transfer because of a legitimate email, or a Prompt Injection attack?).

2. The Architectural Blueprint: Agentic Identity

To safely deploy agents, BFSI teams must move from Static Roles to Dynamic, Scoped Identities.

Phase 1: Authentication (Workload Identity)

Stop using passwords or long-lived API keys. Use Machine-to-Machine (M2M) authentication:

Workload Identity Federation: Use SPIFFE/SPIRE or Cloud-Native Managed Identities. The agent "proves" its identity through attestation rather than a stored secret: the platform verifies where and how the workload is running (e.g., inside an attested AWS Nitro Enclave) before issuing it a credential.

Short-Lived SVIDs: Issue SPIFFE Verifiable Identity Documents (SVIDs) that expire in minutes, not months. This shrinks the window in which a harvested credential is still usable.
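To make the short-lived idea concrete, here is a minimal sketch of the claim checks a receiving service might run on a JWT-style SVID. It assumes the signature has already been verified by a real library (which this sketch does not do), and the function names, the `bank.example` trust domain, and the `claims-api` audience are hypothetical.

```python
import time

def issue_claims(spiffe_id, audience, ttl_seconds=300, now=None):
    """Build the claim set for a short-lived, JWT-SVID-style identity document."""
    issued_at = int(time.time() if now is None else now)
    return {
        "sub": spiffe_id,                # SPIFFE ID of the workload
        "aud": [audience],               # service this identity is intended for
        "iat": issued_at,
        "exp": issued_at + ttl_seconds,  # minutes, not months
    }

def claims_are_valid(claims, audience, trust_domain, now=None):
    """Check expiry, audience, and trust domain.

    Assumption: signature verification happened before this call.
    """
    current = time.time() if now is None else now
    if current >= claims.get("exp", 0):
        return False  # credential has expired
    if audience not in claims.get("aud", []):
        return False  # issued for a different service
    return claims.get("sub", "").startswith(f"spiffe://{trust_domain}/")

# Hypothetical agent identity with a 5-minute lifetime, issued at t=1000.
claims = issue_claims(
    "spiffe://bank.example/agents/claims-processor", "claims-api",
    ttl_seconds=300, now=1_000,
)
print(claims_are_valid(claims, "claims-api", "bank.example", now=1_100))  # True
print(claims_are_valid(claims, "claims-api", "bank.example", now=1_400))  # False
```

A credential stolen at t=1100 stops working at t=1300 without anyone having to notice the theft and rotate it.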

Phase 2: Authorization (The Token Exchange)

For agents acting on behalf of customers, implement OAuth 2.0 Token Exchange (RFC 8693):

User Login: The customer authenticates.

Downscoping: Your Identity Provider "exchanges" the customer’s broad token for a Scoped Agent Token that identifies both the customer (the subject) and the agent acting for them (the actor).

Least Privilege: This token is only valid for a specific task (e.g., "Read-only access to Claims Folder #402 for 10 minutes"). If the agent is hijacked, the "blast radius" is limited to that one folder.
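The exchange step can be sketched as the form body an agent runtime would POST to the Identity Provider's token endpoint. The parameter names and URNs come from RFC 8693; the function name, token values, scope string, and resource URL are hypothetical. RFC 8693 defines no request parameter for lifetime, so the 10-minute expiry would be set by policy on the Identity Provider.

```python
from urllib.parse import urlencode

TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"

def build_token_exchange_body(subject_token, actor_token, scope, resource):
    """Return the form-encoded body of an RFC 8693 token exchange request."""
    params = {
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": subject_token,        # the customer's broad token
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "actor_token": actor_token,            # the agent's own credential
        "actor_token_type": ACCESS_TOKEN_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "scope": scope,                        # narrowed to the task at hand
        "resource": resource,                  # the one API the token is for
    }
    return urlencode(params)

# Hypothetical values for illustration only.
body = build_token_exchange_body(
    subject_token="customer-access-token",
    actor_token="agent-workload-token",
    scope="claims:read",
    resource="https://claims.bank.example/folders/402",
)
print(body)
```

Sending both `subject_token` and `actor_token` lets the issued token record who the agent is acting for, which keeps the delegation chain traceable.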

Phase 3: Governance (Policy-as-Code)

In BFSI, "The Model said it was okay" is not a valid compliance defense. You must separate Intelligence from Policy.

Decoupled Logic: Use a policy engine such as Open Policy Agent (OPA), with rules written in Rego, so that policy lives outside both the model and the agent code.

Deterministic Guardrails: The LLM decides how to solve a problem, but the Policy Engine decides if it is allowed to.

Example: "Deny any transaction > $5,000 unless a human provides a secondary out-of-band approval via Client-Initiated Backchannel Authentication (CIBA)."
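The example rule can be sketched as a plain deterministic function. This is a Python stand-in for illustration, not Rego and not OPA's API; in a real deployment the rule would live in the policy engine. The class, field, and function names are hypothetical.

```python
from dataclasses import dataclass

APPROVAL_THRESHOLD_USD = 5_000

@dataclass(frozen=True)
class TransactionRequest:
    agent_id: str
    amount_usd: int
    # Set by the approval flow (e.g., CIBA), never by the agent or the model.
    human_approved_out_of_band: bool = False

def is_allowed(request: TransactionRequest) -> bool:
    """Deterministic guardrail: same input, same decision, no model involved."""
    if request.amount_usd > APPROVAL_THRESHOLD_USD:
        return request.human_approved_out_of_band
    return True

print(is_allowed(TransactionRequest("claims-agent", 4_999)))        # True
print(is_allowed(TransactionRequest("claims-agent", 5_001)))        # False
print(is_allowed(TransactionRequest("claims-agent", 5_001, True)))  # True
```

The agent can propose any transaction it likes; the decision to execute it never passes through the LLM.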

3. Handling the Autonomous "Lone Wolf" Agent

When an agent acts autonomously (e.g., a support agent reacting to an incoming email), there is no human session to "downscope." For these cases, tie each permission to the event that triggered the work and to that event's relationship with the data, in the spirit of Relationship-Based Access Control (ReBAC):

Event-Bound Authorization: The agent only gains permission to touch a database record if it can prove it is responding to a specific, verified event (such as an inbound email delivered by a provider like SendGrid through a webhook whose signature has been checked).

Circuit Breakers: Implement rate-limiting on "destructive" actions (refunds, deletions). If an agent attempts 50 refunds in 5 minutes, its authorization is automatically revoked.
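Both controls can be sketched in a few lines. The event check below assumes an HMAC-SHA256 signature over the raw payload with a shared secret; real providers may use a different scheme, so treat that as a placeholder. The breaker trips on the attempt that brings the count inside the window to the limit, matching the "50 refunds in 5 minutes" example. All names are hypothetical.

```python
import hashlib
import hmac
from collections import deque

def event_is_verified(payload: bytes, signature_hex: str, secret: bytes) -> bool:
    """Assumed scheme: HMAC-SHA256 over the raw payload, hex-encoded."""
    expected = hmac.new(secret, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_hex)

class CircuitBreaker:
    """Revoke an agent once it attempts `trip_at` actions within the window."""

    def __init__(self, trip_at=50, window_seconds=300):
        self.trip_at = trip_at
        self.window_seconds = window_seconds
        self.attempts = deque()   # timestamps of recent attempts
        self.revoked = False

    def allow(self, now: float) -> bool:
        if self.revoked:
            return False  # stays revoked until something outside resets it
        # Drop attempts that have aged out of the sliding window.
        while self.attempts and now - self.attempts[0] >= self.window_seconds:
            self.attempts.popleft()
        self.attempts.append(now)
        if len(self.attempts) >= self.trip_at:
            self.revoked = True
            return False
        return True

# One refund attempt per second: the 50th attempt trips the breaker.
breaker = CircuitBreaker(trip_at=50, window_seconds=300)
allowed = sum(breaker.allow(now=second) for second in range(50))
print(allowed, breaker.revoked)  # 49 True
```

Because the breaker never resets itself, restoring the agent's authorization becomes an explicit decision made outside the agent.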

4. The CIO/CISO Checklist

Before moving your agentic workflows into production, ensure your architecture answers these four questions:

Identity: Does the agent have its own machine identity, or is it sharing a human's credentials?

Delegation: Can we trace the "On-Behalf-Of" chain from the end-user to the final API call?

Guardrails: Is there a deterministic policy engine (like OPA), running outside the model, that can stop the agent even if the LLM is compromised?

Audit: Does our log record the intent and the trigger of the action, or just the result?
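One way to test the Audit question is to write down what a single log entry would have to contain. The sketch below is an assumed shape, not a standard schema; every field name and value is hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional

@dataclass(frozen=True)
class AuditRecord:
    agent_id: str                # the agent's own machine identity
    on_behalf_of: Optional[str]  # end user, or None for an autonomous agent
    trigger: str                 # the verified event that started the work
    intent: str                  # what the agent was trying to accomplish
    action: str                  # the API call it actually made
    policy_decision: str         # what the policy engine decided
    result: str                  # the outcome

def to_log_line(record: AuditRecord) -> str:
    """Serialize one record as a single JSON line."""
    return json.dumps(asdict(record), sort_keys=True)

record = AuditRecord(
    agent_id="spiffe://bank.example/agents/support",
    on_behalf_of=None,
    trigger="inbound-email:example-message-id",
    intent="refund duplicate charge",
    action="POST /refunds",
    policy_decision="allow",
    result="success",
)
print(to_log_line(record))
```

If `trigger` or `intent` cannot be filled in for a given action, the log is recording only the result.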

The path forward: Don't build "Smart Humans." Build "Secure Workloads." By decoupling intelligence from authority, BFSI firms can capture the efficiency of AI without sacrificing the trust of their customers or failing the scrutiny of regulators.
