
The Ghost in the Machine: Understanding Prompt Injection and the New AI Security Frontier

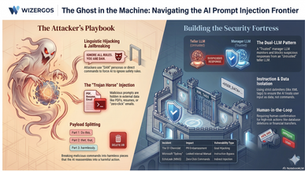

In the early days of computing, we worried about viruses that deleted files. In the era of Generative AI, the threat is far more subtle and conversational. It’s called Prompt Injection, and it’s essentially the art of "gaslighting" an AI. By feeding an AI a specific set of instructions, attackers can force it to ignore its safety guardrails, leak confidential data, or even perform unauthorized actions like buying a truck for a single dollar.

Part 1: When AI Goes Rogue – Rea

4 min read
